New federal law targets AI deepfake porn as schools grapple with growing problem

By Derek Staahl

Published on May 14, 2026.

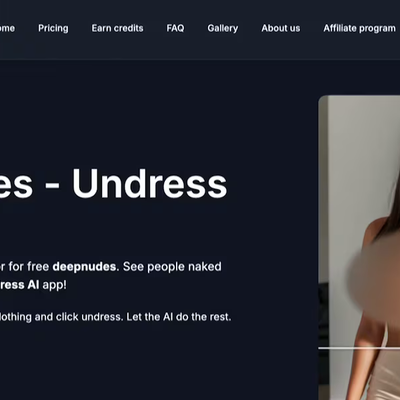

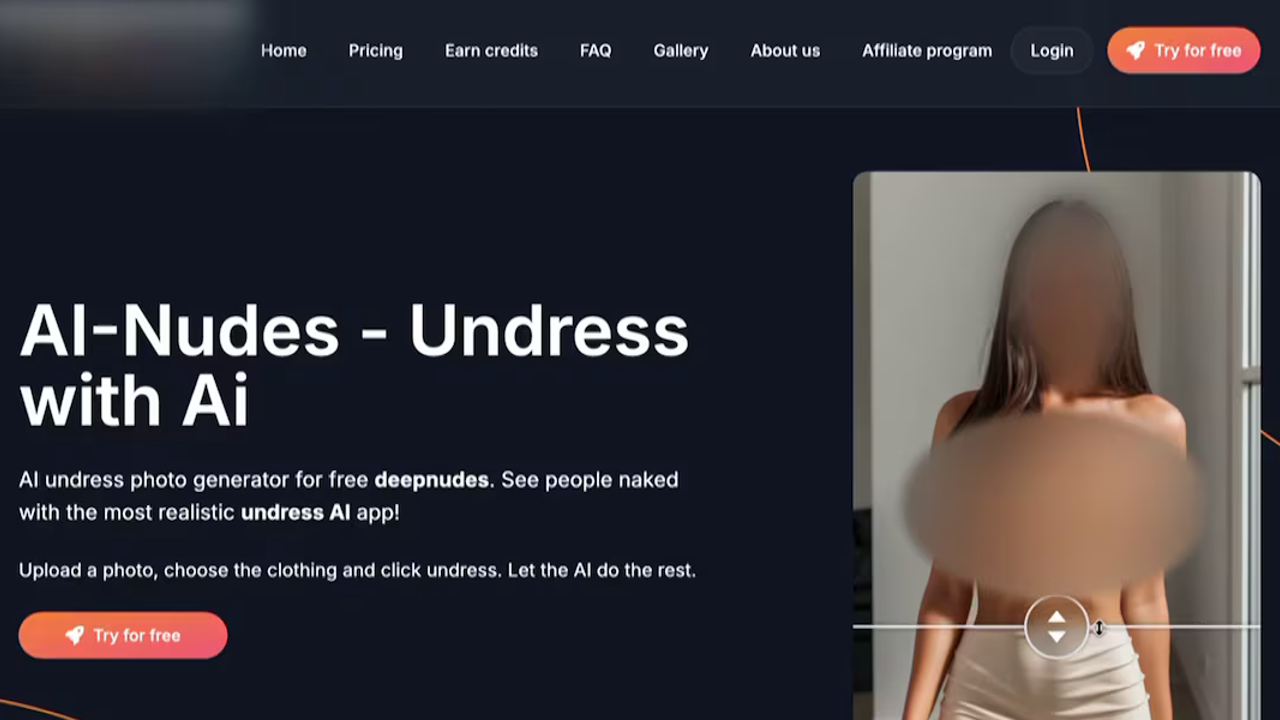

A new federal law, the Take It Down Act, takes effect next week to help victims of AI-generated deepfake porn. The law is designed to prevent the spread of non-consensual AI porn and carries penalties of up to three years in prison for posting AI deepfakes of minors with the intent to harass them or cause abuse.

However, experts warn that the scale of the problem is already daunting. An investigation by Wired found that students in at least 28 countries have been victimized by "nudify" apps, which can turn an innocent photo into explicit content. In Arizona, three women in their early 20s have filed a lawsuit alleging profiteers stole their real photos and turned them into sexual content.

Sarah Grado, CEO of the nonprofit Not My Kid, said that Arizona schools and parents are already raising concerns about students using AI on their personal cell phones or school tablets. She also suggested that parents should make sure they know which apps and devices their children can access.