Judge sides with Anthropic to temporarily block the Pentagon’s ban

By Hayden Field

Published on March 27, 2026.

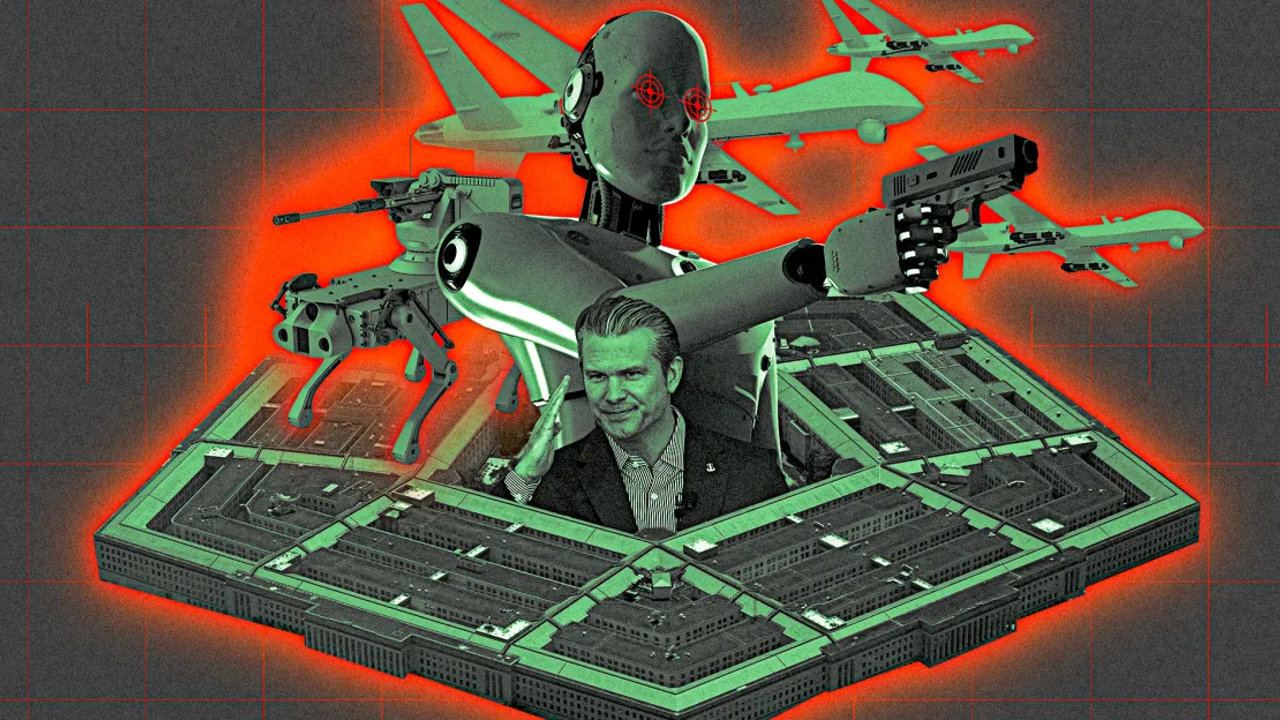

A judge has temporarily blocked the Pentagon's ban on Anthropic's AI product, Claude, in a dispute over whether Claude can be used for autonomous lethal weapons and domestic mass surveillance. The company argues that its technology is not safe to use for these purposes, while the Department of War argues that it is up to military commanders to decide what is safe for the AI to do. The judge ruled that the government is free to stop using Claude and find a more permissive AI vendor. The dispute has led to a flurry of controversy, including social media insults, a formal “supply chain risk” designation, and a lawsuit. Anthropic alleges that its revenue could be at risk, depending on how far the government goes in prohibiting its contractors from working with the company. The department alleged that Anthropic could attempt to disable its technology or alter its behavior during wartime.