Arizona State University researcher warns against overtrusting AI in Iran strikes

By Derek Staahl

Published on April 2, 2026.

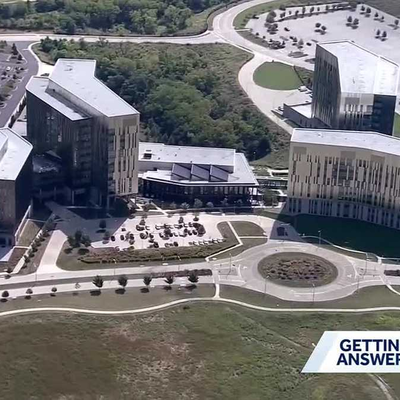

Arizona State University researcher Nancy Cooke, director of ASU's Center for Human, AI, and Robot Teaming, has warned against overtrusting AI in the Iran strikes, which resulted in the deaths of about 170 people, mostly children. The Pentagon's AI-powered battlefield intelligence system, the Maven Smart System, can compress targeting decisions from days or hours into minutes. Cooke argues that AI is unreliable and that humans tend to overtrust it. She also highlighted the risk of over-reliance on AI recommendations, citing large language models that appear human-like but are not like human intelligence. Cooke's research on simulated drone pilot teams found that AI performed its assigned tasks flawlessly while simultaneously making humans perform worse. Even though AI on its own can be fast and effective, the combination of AI working with humans may be slow and bad, she warns. Cooke added that too much information can create paralysis, producing the opposite of what AI promises to deliver.